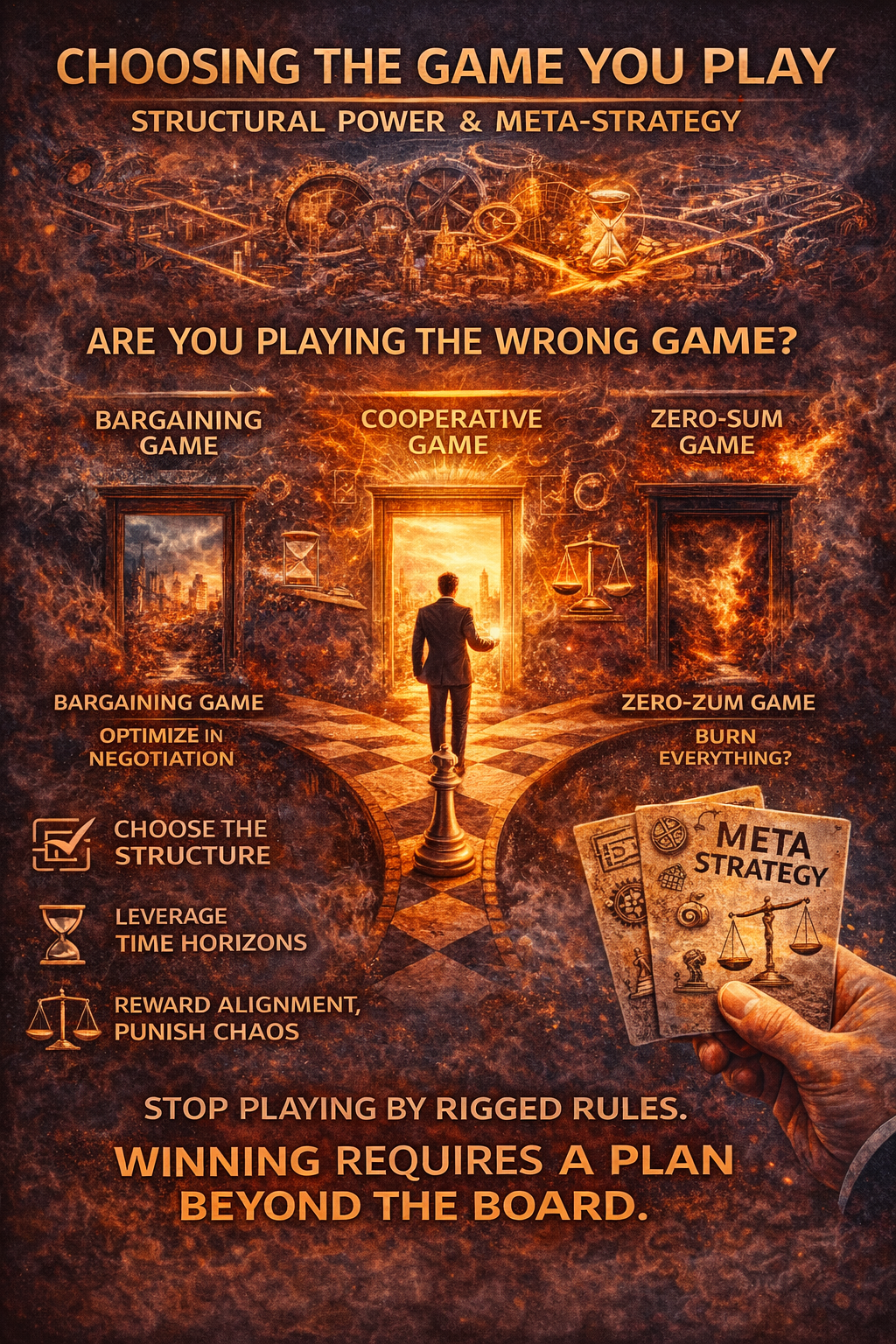

After thirteen parts of dissecting payoff matrices, logistical traps, and evolutionary stability, a single truth remains: Most people lose not because they are irrational, but because they are playing the wrong game.

We are taught from childhood that effort, intelligence, and morality lead to success. But game theory reveals that these are merely moves within a structure—and if the structure is broken, the moves are irrelevant. Most people spend their lives in a Bargaining Game they mistake for a Cooperative one, or worse, they treat a Repeated Game (like a career or a marriage) as a One-Shot sprint. They optimize for the “win” of the moment while burning the reputation and the “logistics tail” required for the marathon.

The Structural Reality of Power

In this series, we have seen that power is not persuasion; power is the ability to walk away without collapsing. Before skill or intelligence ever enters the room, the outcome is often decided by your BATNA (Best Alternative to a Negotiated Agreement). Effort fails without structural leverage because, without an exit option, you are not a negotiator; you are a hostage.
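One standard way to make this concrete is the Nash bargaining solution. It is swapped in here as an illustrative model and is not a tool introduced elsewhere in this series. Assume a fixed surplus that can be split freely and equal bargaining power. Under those assumptions, each side receives its BATNA plus half of whatever the deal adds on top of both BATNAs. The numbers below are hypothetical.

```python
def nash_bargaining_split(surplus: float, batna_a: float, batna_b: float) -> tuple[float, float]:
    """Split `surplus` by the symmetric Nash bargaining solution.

    Maximizes (u_a - batna_a) * (u_b - batna_b) subject to u_a + u_b = surplus.
    Assumes transferable utility and equal bargaining power.
    """
    gains = surplus - batna_a - batna_b
    if gains < 0:
        # No deal beats walking away: both sides keep their outside options.
        return batna_a, batna_b
    # Each side gets its outside option plus an equal share of the gains from the deal.
    return batna_a + gains / 2, batna_b + gains / 2


# Same deal, same negotiating skill: only the outside options differ.
print(nash_bargaining_split(100, 0, 0))   # (50.0, 50.0)
print(nash_bargaining_split(100, 40, 0))  # (70.0, 30.0)
```

Nothing in the second call makes side A more persuasive. A simply has somewhere else to go, and that alone moves 20 units of the surplus.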

This is why “being right” can be a death sentence. In complex systems, winning is often evidence that your map is broken. Whether it is the Winner’s Curse in an auction or an AI agent over-optimizing for a narrow metric, “winning” frequently means you were the most optimistic person in the room about a value that doesn’t exist. Success in a broken system is merely a signal that you have more “sunk costs” than your rivals, making you the easiest target for a Hold-Up.
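The Winner’s Curse can be reproduced with a short simulation. This is a minimal sketch of a common-value auction under hypothetical parameters: an item is worth exactly 100 to everyone, and five bidders each see an unbiased but noisy estimate of that value. Every bidder naively bids their own estimate, and the highest bid wins and pays its bid.

```python
import random
import statistics


def average_winner_profit(true_value: float, n_bidders: int, noise_sd: float,
                          trials: int, seed: int = 0) -> float:
    """Average profit of the winner when everyone bids their own noisy estimate."""
    rng = random.Random(seed)
    profits = []
    for _ in range(trials):
        # Each estimate is correct on average: true value plus zero-mean noise.
        estimates = [rng.gauss(true_value, noise_sd) for _ in range(n_bidders)]
        winning_bid = max(estimates)  # the most optimistic bidder wins
        profits.append(true_value - winning_bid)
    return statistics.mean(profits)


print(average_winner_profit(true_value=100, n_bidders=5, noise_sd=20, trials=10_000))
```

With these assumptions the average comes out at roughly -23. No individual estimate is biased, but selecting the highest one is: winning is exactly the event that tells you your estimate was too high.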

Why Systems Stay Broken

If these failures are so obvious, why do they persist? Because systems don’t reward what is good; they reward what survives. We see toxic environments and “Hawk” behaviors endure not because people are blind to them, but because of Evolutionarily Stable Strategies. In a world of “Doves,” a “Hawk” thrives. Knowledge doesn’t fix a toxic culture; only changing the payoff matrix does. Until betrayal costs more than it pays, the “bad” behavior remains the mathematically optimal move for the individual.
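The Hawk-Dove game is the textbook setting for this. This is a minimal sketch under several assumptions: the standard payoffs (a contested resource worth V, a fight costing C, with C > V), and replicator dynamics approximated by small Euler steps. Under those assumptions, a handful of Hawks invades a population of Doves and settles at a Hawk share of V/C. The parameter values are hypothetical.

```python
def hawk_dove_payoffs(p_hawk: float, value: float, cost: float) -> tuple[float, float]:
    """Expected payoff of a Hawk and of a Dove when a fraction p_hawk
    of the population plays Hawk (standard Hawk-Dove payoffs)."""
    hawk = p_hawk * (value - cost) / 2 + (1 - p_hawk) * value
    dove = (1 - p_hawk) * value / 2
    return hawk, dove


def evolve(p_hawk: float, value: float, cost: float,
           dt: float = 0.1, steps: int = 2000) -> float:
    """Replicator dynamics: a strategy's share grows when it out-earns the other."""
    for _ in range(steps):
        hawk, dove = hawk_dove_payoffs(p_hawk, value, cost)
        p_hawk += dt * p_hawk * (1 - p_hawk) * (hawk - dove)
    return p_hawk


# In an all-Dove world a lone Hawk earns V while Doves earn V/2, so Hawks invade.
print(hawk_dove_payoffs(0.0, value=2, cost=6))  # (2.0, 1.0)
print(round(evolve(0.01, value=2, cost=6), 3))  # 0.333
```

Raising `cost` lowers the equilibrium Hawk share V/C. Telling the players that fighting is bad does not move it; changing what fighting costs does.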

The Limits of Cooperation

We often hear that cooperation is the “better” way to live. Game theory is more cynical: Cooperation is optimal only when the future exists. Through Tit-for-Tat, we see that being “nice but provocable” works only when there is a “Shadow of the Future”: a high enough probability that you will meet again. When the time horizon shrinks, or when “noise” and bad intelligence create a “hallucinated map,” cooperation breaks down. It invites exploitation, and misread moves trigger “death spirals” where both sides burn the board rather than concede a single point.
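The “noise” failure mode is easy to reproduce. This is a minimal sketch assuming two Tit-for-Tat players in an iterated Prisoner’s Dilemma. A single mistaken defection is injected at one round through a hypothetical `error_round` parameter. The mistake is never forgiven; it simply echoes back and forth.

```python
from typing import Optional


def tit_for_tat(opponent_history: list[str]) -> str:
    """Cooperate first, then copy the opponent's previous move."""
    return "C" if not opponent_history else opponent_history[-1]


def play_tft_pair(rounds: int, error_round: Optional[int] = None) -> tuple[str, str]:
    """Play two Tit-for-Tat agents against each other; optionally force
    player A to defect once by mistake (a misread signal, a glitch)."""
    moves_a: list[str] = []
    moves_b: list[str] = []
    for t in range(rounds):
        a = tit_for_tat(moves_b)
        b = tit_for_tat(moves_a)
        if t == error_round:
            a = "D"  # one error, not a change of strategy
        moves_a.append(a)
        moves_b.append(b)
    return "".join(moves_a), "".join(moves_b)


print(play_tft_pair(8))                 # ('CCCCCCCC', 'CCCCCCCC')
print(play_tft_pair(8, error_round=2))  # ('CCDCDCDC', 'CCCDCDCD')
```

After the error, the pair never returns to mutual cooperation on its own. Variants such as “generous” Tit-for-Tat, which occasionally forgive a defection, are one common way to break the echo.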

The End of the Game

This is how the most significant games—from corporate collapses to the tragedy of war—actually end. Most games don’t end with winners; they end when the board breaks. When the cost of surrender (physical or cultural extinction) exceeds the cost of total resistance, the game moves from a “Bargaining Equilibrium” to a “Survival Game.” At that point, victory is impossible; there is only exhaustion or collapse.

AI only accelerates these failure modes. By replacing human reflection with algorithmic speed, we risk Fast Collusion and “flash crashes” where agents optimize for engagement or profit at the expense of system stability. In an agentic future, the “Alignment Problem” isn’t just about AI being “good”—it’s about ensuring the AI isn’t playing a Zero-Sum Game we didn’t even know existed.

The Final Move: Choosing Your Exit

If you take one thing from this series, let it be this: Outcomes are shaped by structure, not intention.

You should no longer strive to be “smarter” or “harder-working” within a rigged matrix. Instead, you must become a Meta-Game Designer. Stop optimizing your moves and start choosing your games.

Never make your assets 100% specific to a single partner or platform; if you can’t exit, you can’t negotiate.

Ignore “Cheap Talk” and look only for “Credible Signals” (actions that cost the sender something to lie about).

Stop extending long-game trust to people whose time horizon is shorter than yours; to them, the game is one-shot.

Most people lose the “game of life” not because they lack intelligence, but because they expend their cognitive energy trying to outsmart opponents who simply have different incentives. They treat strategy as a series of clever maneuvers or persuasive speeches. But true leverage doesn’t come from persuasion; it comes from alignment.

The first pillar of this shift is Designing for Honesty. Instead of relying on constant vigilance to prevent “cheating,” you must change the rules so that an opponent’s own rational selfishness forces them to assist you. Take the “Broken Game” of hourly billing: a contractor is mathematically incentivized to work slowly to maximize their billable hours, while the client is incentivized to want the job done yesterday. It is a structural conflict. By “flipping” the matrix to a Value-Based Contract, the contractor’s best move becomes efficiency. You haven’t changed their personality; you’ve simply engineered a world where their greed serves your goals.
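The flip can be checked with a toy model that uses hypothetical numbers. The job needs at least 10 hours and can be stretched to 40. Each hour costs the contractor 30 in effort. The client pays either 100 per hour or a fixed 2,000 for the finished result. The contractor simply picks the hours that maximize their own profit.

```python
from collections.abc import Callable


def best_hours(pay: Callable[[int], float], effort_cost_per_hour: float,
               hour_options: range) -> int:
    """Hours a purely self-interested contractor chooses: maximize pay minus effort cost."""
    return max(hour_options, key=lambda h: pay(h) - effort_cost_per_hour * h)


HOURS = range(10, 41)  # hypothetical: the job can take anywhere from 10 to 40 hours
EFFORT_COST = 30       # hypothetical cost to the contractor of each hour worked


def hourly_pay(hours: int) -> float:
    return 100 * hours  # every extra hour is extra revenue


def value_based_pay(hours: int) -> float:
    return 2_000        # paid for the result; extra hours only add cost


print(best_hours(hourly_pay, EFFORT_COST, HOURS))       # 40
print(best_hours(value_based_pay, EFFORT_COST, HOURS))  # 10
```

The contractor is the same in both calls; only the payoff function passed in changes.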

However, even the most elegant design requires a Final Reality Check. A perfect game on paper is often the first thing to break in the wild because you are always playing an Open Game. You must account for the “Table-Flippers”—players who are driven by spite or ideology rather than money—and the “Macro-Shifts” that can rewrite a market’s incentives overnight. In a world of hallucinated maps and incomplete information, you cannot build for a static, perfect Nash Equilibrium. You must build for Robustness. A robust system is one that doesn’t shatter when players act irrationally or when “Hawk” strategies inevitably invade the ecosystem.

To ensure this robustness lasts, you must use the Time-Horizon Anchor. Any system that rewards short-term wins is an open invitation for a “Hold-Up”—where a partner burns the relationship for a quick payout. To prevent this, you must design rules that make the Long-Term Repeated Game the only one worth playing. When the payoff for the next 100 rounds of cooperation is visibly higher than the one-time profit of betrayal, the player will stay. You anchor their loyalty not in their character, but in their math.
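The anchor can be checked with simple arithmetic. This minimal sketch makes three assumptions. First, it uses the conventional Prisoner’s Dilemma payoffs: 3 each for mutual cooperation, 5 for betraying a cooperator, and 1 each for mutual defection. Second, the partner answers betrayal with permanent defection. Third, a discount factor `delta` between 0 and 1 stands in for how much the player values each future round.

```python
def stays_loyal(delta: float, rounds: int = 100,
                reward: float = 3, temptation: float = 5, punishment: float = 1) -> bool:
    """Compare discounted payoffs over `rounds`: cooperate every round, versus
    betray once now and face mutual defection for every remaining round."""
    cooperate = sum(reward * delta**t for t in range(rounds))
    betray = temptation + sum(punishment * delta**t for t in range(1, rounds))
    return cooperate > betray


print(stays_loyal(delta=0.9))  # True: the future is worth protecting
print(stays_loyal(delta=0.3))  # False: a short horizon makes betrayal pay
```

In this toy model the tipping point sits near `delta = 0.5`, which equals (5 - 3) / (5 - 1) for these payoffs. Anything that makes the future count for more pushes the player above it.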

The Strategic Verdict

The single unifying lesson of this 14-part journey is that outcomes are shaped by structure, not intention.

If you have internalized this, you are no longer a pawn. You stop asking, “What move should I make?” and start asking, “How should this game be built so that everyone’s best move leads to the outcome I want?” You stop trusting words and start trusting costs. You realize that optimizing for a “win” inside a losing structure is a fool’s errand; instead, you exit the structure entirely.

Game theory is not a crystal ball—it is a stress test. It shows you exactly where a structure is destined to crack under the pressure of human incentive. If this series has worked, you will never again make the mistake of trusting a person’s promises over their payoffs. When you see a failing system, you will stop blaming the people involved and start looking for the matrix that makes their “bad” behavior rational.

The most important move you will ever make is not how you play the hand you’re dealt—it is the decision to get up and find a better table.

While the preceding chapters focused on the intuition and strategic architecture of our reality, some readers may want to peek behind the curtain at the machinery that makes these conclusions possible. For those who want the mathematical rigour that keeps game theory from devolving into mere rhetoric, the following appendix serves as a bridge. This isn’t complexity for complexity’s sake; it provides the formal translation layer required to turn high-stakes observations into solvable models. If the main text taught you how to see the game, this section provides the syntax for how those games are actually written down.

Appendix A: The Formal Foundations of Game Theory

Purpose of This Appendix

The main text emphasizes intuition, structure, and real-world application. This appendix provides the minimum formal machinery required to:

Read academic or technical game-theory material.

Translate real situations into solvable models.

Understand why the conclusions in the main text are provable, not rhetorical.

No proofs are required to use game theory, but familiarity with these tools prevents misapplication.

A1. Formal Definition of a Game

A (normal-form) game is defined by the triple:

$$G = (N, S, U)$$

Where:

$N$ = set of players, $N = \{1, 2, \dots, n\}$

$S = S_1 \times S_2 \times \dots \times S_n$ = set of strategy profiles, where $S_i$ is player $i$’s strategy set

$U$ = payoff functions, where $U_i : S_1 \times S_2 \times \dots \times S_n \rightarrow \mathbb{R}$

A strategy is a complete contingent plan, not a single move. This formalism underlies all models discussed in the main text, including war, bargaining, platforms, and AI agents.
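The triple translates directly into a data structure. This is a minimal sketch using the conventional Prisoner’s Dilemma payoffs as an illustration. Players are indices, each player has a list of strategies, and the payoff function maps a full strategy profile to one payoff per player.

```python
from itertools import product

# N: the players
players = [0, 1]

# S_i: each player's strategy set ("C" = cooperate, "D" = defect)
strategy_sets = [["C", "D"], ["C", "D"]]

# U: maps each strategy profile to the tuple of payoffs (U_0, U_1)
payoffs = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def utility(i: int, profile: tuple[str, ...]) -> float:
    """U_i(s): player i's payoff at strategy profile s."""
    return payoffs[profile][i]


# S = S_0 x S_1: enumerate every strategy profile and its payoffs
for profile in product(*strategy_sets):
    print(profile, [utility(i, profile) for i in players])
```

Here a strategy is a single move only because the game is one-shot and simultaneous. In a sequential game, each entry of $S_i$ would have to specify a move at every decision point the player could face.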

A2. Dominant Strategies and Strategic Stability

A strategy $s_i \in S_i$ is strictly dominant if:

$$U_i(s_i, s_{-i}) > U_i(s'_i, s_{-i}) \quad \forall s'_i \neq s_i, \forall s_{-i}$$

Meaning: it yields a higher payoff regardless of what others do. If every player has a dominant strategy, the outcome is structurally locked. This is why the Prisoner’s Dilemma produces cooperation failure without appeal to psychology.
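The definition can be checked mechanically. This minimal sketch assumes the conventional Prisoner’s Dilemma payoffs. For each player it tests whether one strategy beats every alternative against every possible move by the other player.

```python
from typing import Optional

# Conventional Prisoner's Dilemma payoffs: (player 0, player 1).
PAYOFFS = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}
STRATEGIES = ["C", "D"]


def profile(i: int, own: str, other: str) -> tuple[str, str]:
    """Build the profile (s_0, s_1) in which player i plays `own`."""
    return (own, other) if i == 0 else (other, own)


def strictly_dominant(i: int) -> Optional[str]:
    """Return player i's strictly dominant strategy, or None if there is none."""
    for s in STRATEGIES:
        beats_every_alternative = all(
            PAYOFFS[profile(i, s, other)][i] > PAYOFFS[profile(i, alt, other)][i]
            for alt in STRATEGIES if alt != s   # every s'_i != s_i
            for other in STRATEGIES             # every s_{-i}
        )
        if beats_every_alternative:
            return s
    return None


print(strictly_dominant(0), strictly_dominant(1))  # D D
```

Both players defect and land on (1, 1), even though (3, 3) was available. The failure lives in the payoff structure, not in anyone’s psychology.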

A3. Nash Equilibrium (Formal Definition)

A Nash Equilibrium is a strategy profile $s^* = (s_1^*, \dots, s_n^*)$ such that:

$$U_i(s_i^*, s_{-i}^*) \ge U_i(s_i, s_{-i}^*) \quad \forall i \in N, \forall s_i \in S_i$$

Interpretation: No player can improve their payoff by unilaterally deviating. It is a state of strategic mutual best-response. Note that a Nash Equilibrium does not imply fairness or efficiency; it only implies stability.
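The definition also suggests a brute-force search for small games: check every pure profile for a profitable unilateral deviation. This is a minimal sketch run on the conventional Prisoner’s Dilemma and on a Stag Hunt with hypothetical payoffs.

```python
from itertools import product


def pure_nash_equilibria(strategy_sets: list[list[str]],
                         payoffs: dict[tuple[str, ...], tuple[float, ...]]) -> list[tuple[str, ...]]:
    """Return every pure strategy profile from which no player gains by deviating alone."""
    equilibria = []
    for profile in product(*strategy_sets):
        # Swap in each alternative for player i, holding everyone else fixed.
        is_stable = all(
            payoffs[profile[:i] + (alt,) + profile[i + 1:]][i] <= payoffs[profile][i]
            for i, options in enumerate(strategy_sets)
            for alt in options
        )
        if is_stable:
            equilibria.append(profile)
    return equilibria


prisoners_dilemma = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
                     ("D", "C"): (5, 0), ("D", "D"): (1, 1)}
# Hypothetical Stag Hunt payoffs: hunting stag together beats hunting hare.
stag_hunt = {("Stag", "Stag"): (4, 4), ("Stag", "Hare"): (0, 3),
             ("Hare", "Stag"): (3, 0), ("Hare", "Hare"): (3, 3)}

print(pure_nash_equilibria([["C", "D"], ["C", "D"]], prisoners_dilemma))
# [('D', 'D')]
print(pure_nash_equilibria([["Stag", "Hare"], ["Stag", "Hare"]], stag_hunt))
# [('Stag', 'Stag'), ('Hare', 'Hare')]
```

The Prisoner’s Dilemma equilibrium is stable but inefficient. The Stag Hunt has two stable outcomes, only one of them efficient, so stability alone does not tell you where a group will land.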

A4. Mixed Strategies (Why Randomness Appears)

In some games, no pure-strategy equilibrium exists. A mixed strategy assigns probabilities to strategies:

$$\sigma_i \in \Delta(S_i)$$

Where $\Delta(S_i)$ is the probability simplex over strategies. Expected payoffs are computed as:

$$\mathbb{E}[U_i] = \sum_{s \in S} \left( \prod_j \sigma_j(s_j) \right) U_i(s)$$

Mixed strategies explain deterrence credibility, unpredictability in conflict, and market “noise” that is actually equilibrium behavior.
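The expected-payoff formula can be evaluated directly. This minimal sketch uses Matching Pennies, a standard game with no pure-strategy equilibrium, chosen here for illustration. When the opponent randomizes 50/50, every strategy of player 0 earns the same expected payoff, so there is no pattern left to exploit.

```python
from itertools import product
from math import prod

# Matching Pennies: player 0 wins on a match, player 1 wins on a mismatch.
STRATEGIES = [["H", "T"], ["H", "T"]]
PAYOFFS = {("H", "H"): (1, -1), ("H", "T"): (-1, 1),
           ("T", "H"): (-1, 1), ("T", "T"): (1, -1)}


def expected_payoff(i: int, sigma: list[dict[str, float]]) -> float:
    """E[U_i] = sum over profiles s of (prod_j sigma_j(s_j)) * U_i(s)."""
    return sum(
        prod(sigma[j][s_j] for j, s_j in enumerate(s)) * PAYOFFS[s][i]
        for s in product(*STRATEGIES)
    )


uniform = {"H": 0.5, "T": 0.5}
# Against a 50/50 opponent, heads, tails, and 50/50 all earn player 0 the same.
for own in ({"H": 1.0, "T": 0.0}, {"H": 0.0, "T": 1.0}, uniform):
    print(expected_payoff(0, [own, uniform]))  # 0.0 each time
```

If the opponent drifts from 50/50 (say 70% heads), always playing heads earns player 0 about 0.4 per round. That is why, in equilibrium, the randomness has to be real.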

A5. Extensive-Form Games & Backward Induction

Many real games are sequential, not simultaneous. An extensive-form game includes a game tree and timing of moves. Backward Induction solves the game from the end backward:

Identify optimal final moves.

Infer rational earlier moves.

Collapse the tree.

This is essential for bargaining and war escalation.
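The three steps above can be sketched as a short recursive function. It assumes a finite game tree with perfect information, and ties go to the first action listed. The example is a hypothetical entry-deterrence game: an entrant decides whether to enter a market, and if it does, the incumbent decides whether to fight or accommodate.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A decision node where `player` picks one of `children`; leaves carry payoffs."""
    player: int = -1                              # -1 marks a leaf
    children: dict = field(default_factory=dict)  # action -> subtree
    payoffs: tuple = ()                           # (entrant, incumbent) at a leaf


def backward_induction(node: Node) -> tuple[tuple, list[str]]:
    """Solve the tree from the leaves up; return the payoffs reached and the actions taken."""
    if not node.children:
        return node.payoffs, []
    best = None
    for action, child in node.children.items():
        payoffs, path = backward_induction(child)  # optimal play in the subtree
        if best is None or payoffs[node.player] > best[0][node.player]:
            best = (payoffs, [action] + path)
    return best


# Hypothetical payoffs: player 0 = entrant, player 1 = incumbent.
game = Node(player=0, children={
    "stay out": Node(payoffs=(0, 10)),
    "enter": Node(player=1, children={
        "fight": Node(payoffs=(-2, 2)),
        "accommodate": Node(payoffs=(3, 5)),
    }),
})

print(backward_induction(game))  # ((3, 5), ['enter', 'accommodate'])
```

The incumbent’s threat to fight is not credible. Once entry has happened, fighting pays 2 and accommodating pays 5, so the entrant can ignore the threat and enter.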

A6. Incomplete Information & Bayesian Games

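When players lack full information, games become Bayesian. Each player has a type $\theta_i$ and beliefs $p(\theta_{-i} \mid \theta_i)$ about the other players’ types. Given strategies $s_j(\theta_j)$ that map types to actions, expected utility becomes:

$$\mathbb{E}[U_i \mid \theta_i] = \sum_{\theta_{-i}} p(\theta_{-i} \mid \theta_i)\, U_i\big(s_i(\theta_i), s_{-i}(\theta_{-i}); \theta_i, \theta_{-i}\big)$$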

This formalizes signaling, the winner’s curse, and principal-agent problems.
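The sum over opponent types can be computed directly. This is a minimal sketch of a hypothetical negotiation. The other side is either “patient” (a good outside option, so it rejects a hard offer) or “desperate” (it accepts anything). For brevity the proposer’s own type is held fixed, so it enters only through the beliefs passed in.

```python
# s_{-i}(theta_{-i}): how each (hypothetical) opponent type responds to each offer
OPPONENT_STRATEGY = {
    "patient": {"hard": "reject", "soft": "accept"},
    "desperate": {"hard": "accept", "soft": "accept"},
}

# U_i(own offer, opponent response): value of the outcome to the proposer
PROPOSER_PAYOFF = {
    ("hard", "accept"): 8, ("hard", "reject"): 0,
    ("soft", "accept"): 5, ("soft", "reject"): 0,
}


def expected_utility(offer: str, beliefs: dict[str, float]) -> float:
    """E[U_i | theta_i]: payoff averaged over opponent types, weighted by beliefs."""
    return sum(
        prob * PROPOSER_PAYOFF[(offer, OPPONENT_STRATEGY[opp_type][offer])]
        for opp_type, prob in beliefs.items()
    )


def best_offer(beliefs: dict[str, float]) -> str:
    """Pick the offer with the higher expected utility under these beliefs."""
    return max(("hard", "soft"), key=lambda offer: expected_utility(offer, beliefs))


print(best_offer({"patient": 0.4, "desperate": 0.6}))  # soft: E[hard] = 4.8, E[soft] = 5.0
print(best_offer({"patient": 0.2, "desperate": 0.8}))  # hard: E[hard] = 6.4, E[soft] = 5.0
```

The payoffs and the opponent’s behavior are identical in both calls; only the beliefs change, and with them the best move. This is why signals that shift beliefs carry strategic value.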

A7. Repeated Games & Folk Theorem

In infinitely repeated games, cooperation can emerge because reputation becomes a payoff component. The Folk Theorem mathematically grounds the idea that any feasible payoff giving every player more than the one-shot Nash equilibrium can be sustained, provided players are patient enough and future punishment is credible. That punishment can be a reversion to the one-shot equilibrium, or the retaliation built into Tit-for-Tat.
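For the Prisoner’s Dilemma, “patient enough” has an exact threshold. The short derivation below rests on three assumptions. The stage payoffs satisfy $T > R > P > S$ (temptation, reward, punishment, sucker’s payoff). Both players share a discount factor $\delta \in (0, 1)$. Punishment follows a grim trigger: cooperate until the other side defects, then defect forever.

Cooperating forever is worth

$$V_{\text{coop}} = R + \delta R + \delta^2 R + \dots = \frac{R}{1-\delta}$$

while defecting once and being punished forever after is worth

$$V_{\text{dev}} = T + \delta P + \delta^2 P + \dots = T + \frac{\delta P}{1-\delta}$$

Cooperation is sustainable when $V_{\text{coop}} \ge V_{\text{dev}}$:

$$\frac{R}{1-\delta} \ge T + \frac{\delta P}{1-\delta} \iff R \ge (1-\delta)T + \delta P \iff \delta \ge \frac{T-R}{T-P}$$

With the conventional payoffs $T = 5$, $R = 3$, $P = 1$, cooperation holds whenever $\delta \ge 1/2$.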

A8. Mechanism Design

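Mechanism design reverses the problem: design rules such that selfish behavior produces desired outcomes. A mechanism is (dominant-strategy) incentive compatible if reporting one’s true type $\theta_i$ maximizes one’s payoff whatever the others report:

$$\theta_i \in \arg\max_{\hat{\theta}_i} U_i(\hat{\theta}_i, \hat{\theta}_{-i}; \theta_i) \quad \forall i, \forall \theta_i, \forall \hat{\theta}_{-i}$$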

This is the formal backbone of platform auctions and contract design.
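The sealed-bid second-price (Vickrey) auction is the classic incentive-compatible mechanism and serves here as an illustration. This minimal sketch brute-forces the claim for one bidder against a single rival over a grid of bids. The grid and the true value of 10 are hypothetical. No deviation from truthful bidding ever pays.

```python
def second_price_utility(bid: float, value: float, other_bids: list[float]) -> float:
    """One bidder's utility in a sealed-bid second-price auction: the highest bid
    wins and pays the highest competing bid. Ties go against this bidder
    (a simplifying assumption)."""
    highest_other = max(other_bids)
    return value - highest_other if bid > highest_other else 0.0


def truthful_is_optimal(value: float, other_bids: list[float], bid_grid: list[float]) -> bool:
    """Check that bidding one's true value does at least as well as any bid on the grid."""
    truthful = second_price_utility(value, value, other_bids)
    return all(truthful >= second_price_utility(b, value, other_bids) for b in bid_grid)


GRID = [x / 2 for x in range(0, 41)]  # candidate bids 0.0, 0.5, ..., 20.0
# Whatever the rival bids, misreporting never helps a bidder whose true value is 10.
print(all(truthful_is_optimal(10.0, [rival], GRID) for rival in GRID))  # True
```

The price a winner pays does not depend on their own bid, so shading or inflating it can only change whether they win, never what they pay. In a first-price auction, by contrast, bidding your true value guarantees zero profit, so bidders shade their bids.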

A9. Limits of Formalism

Formal models assume stable preferences and consistent reasoning. When these fail, the model outputs false equilibria or brittle strategies. This appendix should be used as a translation layer, not a substitute for judgment.

Closing Note for Readers

Mastery lies in knowing when to formalize, when to abandon the model, and when to redesign the game entirely.